There is one metric that obsesses every entrepreneur: the conversion rate. You launch a marketing campaign, you attract 1,000 visitors, and you make 10 sales. Your rate is 1%. You think you need to rethink your product.

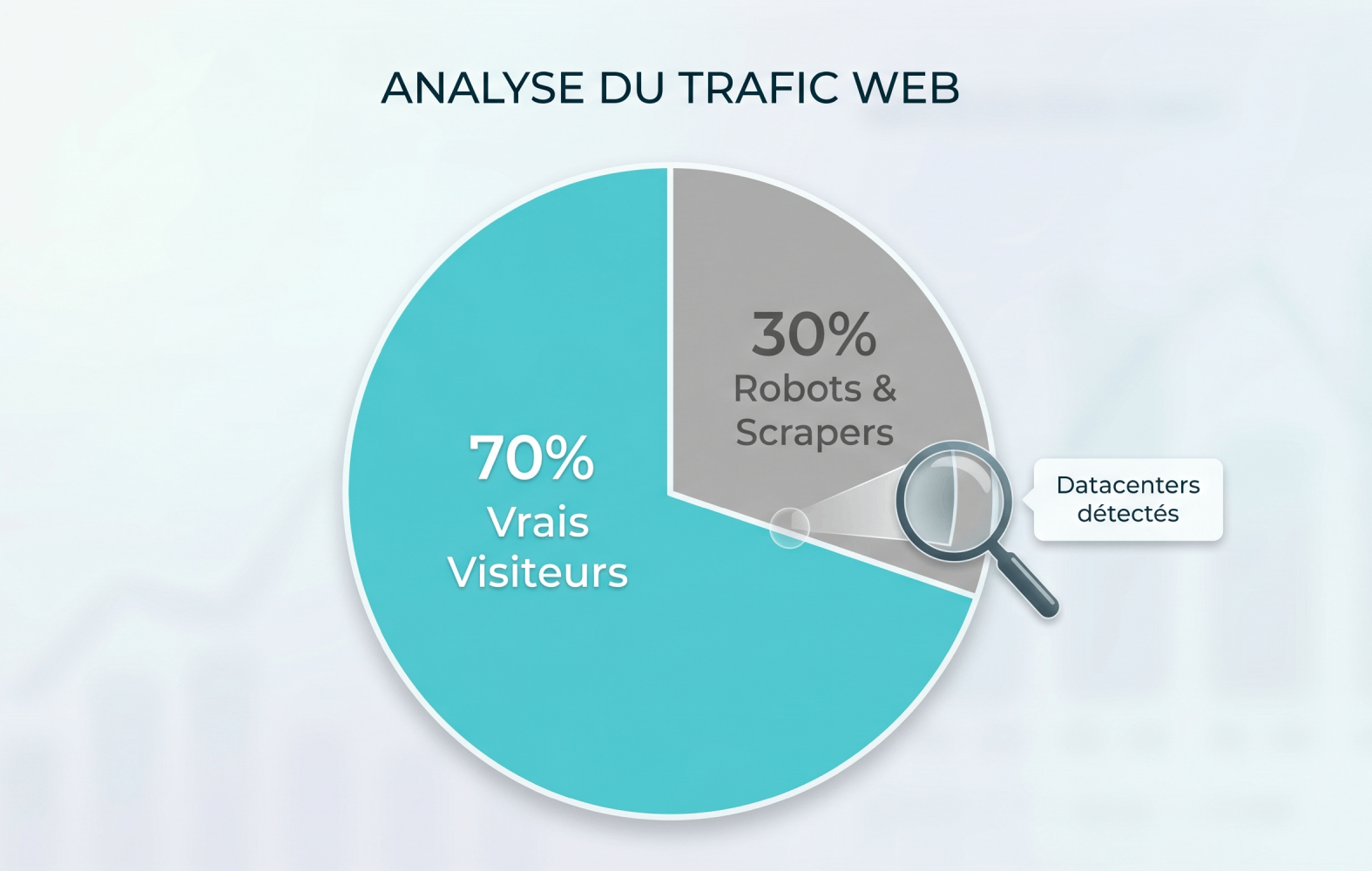

But what happens if, out of those 1,000 visitors, 400 were actually robots? Your real human traffic was 600 people. Your 10 sales therefore represent a real conversion rate of about 1.7% (10 ÷ 600), not 1%. Your strategic decisions were based on a mathematical illusion.
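To make the arithmetic concrete, here is a minimal Python sketch of the same calculation. The function names (`conversion_rate`, `human_conversion_rate`) are made up for illustration and are not part of any Alternytics API; the figures are the ones from the example above.

```python
def conversion_rate(sales: int, visitors: int) -> float:
    """Return the conversion rate as a percentage."""
    if visitors <= 0:
        raise ValueError("visitors must be a positive number")
    return 100.0 * sales / visitors


def human_conversion_rate(sales: int, visitors: int, bot_visits: int) -> float:
    """Conversion rate after removing visits identified as automated."""
    human_visitors = visitors - bot_visits
    return conversion_rate(sales, human_visitors)


# Figures from the example: 1,000 visits, 10 sales, 400 of the visits were bots.
raw = conversion_rate(10, 1000)
adjusted = human_conversion_rate(10, 1000, 400)

print(f"Raw rate: {raw:.2f}%")            # Raw rate: 1.00%
print(f"Human-only rate: {adjusted:.2f}%")  # Human-only rate: 1.67%
```

The sales figure stays the same in both cases. Only the denominator changes, and that alone moves the rate by two thirds.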

Why are bots invading your site?

The web has changed. Today, your site isn't just visited by people on their smartphones. It is constantly scanned by:

- Artificial intelligence algorithms seeking training data.

- Automated competitive analysis tools.

- Search engine crawlers.

Many of these scripts imitate human behavior convincingly. They navigate, click, and skew the numbers reported by your classic tracking tools.

Transparency as a defensive weapon

At Alternytics, data reliability and security are our technical priorities. We understand that it is impossible to block 100% of bots without risking blocking real users.

Our approach is different: we don't block them, we classify them.

Our engine analyzes connection patterns (such as calls from US or Asian data center IPs) without using intrusive cookies. Traffic flagged as suspicious is then kept out of your primary KPIs.
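The "classify, don't block" idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the actual Alternytics engine: the `Visit` type, the `summarize` function, and the network list are hypothetical, and the IP ranges are reserved documentation ranges standing in for real data center ranges. A real classifier would likely combine several signals rather than IP alone.

```python
import ipaddress
from dataclasses import dataclass

# Placeholder ranges (reserved for documentation), standing in for a real
# list of data center networks. This list is an assumption for the example.
DATACENTER_NETWORKS = [
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("203.0.113.0/24"),
]


@dataclass
class Visit:
    ip: str
    converted: bool = False


def is_datacenter(ip: str) -> bool:
    """True if the address falls inside one of the listed networks."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in DATACENTER_NETWORKS)


def summarize(visits: list[Visit]) -> dict:
    """Compute KPIs on human traffic while still counting automated visits."""
    human = [v for v in visits if not is_datacenter(v.ip)]
    automated_count = len(visits) - len(human)
    sales = sum(1 for v in human if v.converted)
    rate = 100.0 * sales / len(human) if human else 0.0
    return {
        "human_visits": len(human),
        "automated_visits": automated_count,  # kept visible, not discarded
        "sales": sales,
        "conversion_rate_pct": rate,
    }


visits = [
    Visit("192.0.2.10", converted=True),
    Visit("192.0.2.11"),
    Visit("198.51.100.7"),   # matches a listed network
    Visit("203.0.113.42"),   # matches a listed network
]
print(summarize(visits))
```

Nothing is rejected at the door: every visit is recorded, and the automated ones are simply counted in a separate bucket so they no longer dilute the conversion rate.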

In your Alternytics interface, you have access to your filtered statistics, but we keep a transparent indicator of this automated traffic. Forewarned is forearmed, and it is vital to know who (or what) is interested in your content.